Unpacking the Economic Internet with Fetch.AI CTO Toby Simpson

In the following interview, BTCManager caught up with Fetch.AI’s CTO Toby Simpson to get a better understanding of their project to empower IoT devices with economic agency. Things like a Tesla sports car, a dishwasher, a smartphone, and the predicted 20 billion devices that will exist in our world by 2022 is a huge opportunity for blockchain enthusiasts.

Technical Frontier

Fetch.AI, as the name would suggest, blends some of the hottest technology topics to leverage this army of machines. A stack that includes machine learning, blockchain technology, and, of course, artificial general intelligence, will, according to Simpson, not create a new Terminator, but instead, help us manage an overly-complexing reality.

After raising $6 million in a recent token sale on Binance’s Launchpad platform, they’ve certainly got at least a few fans.

Before joining the crypto-revolution, Simpson was building “bottom-up” systems for games like the life simulation Creatures series and was also an early developer of Google’s DeepMind. The arrival of tokenizing technology brought many of these ideas together, and thus, the birth of Fetch.AI.

BTCManager:

What was your background before entering the blockchain space?

Toby Simpson:

That is a very interesting story.

I come from a computer games background and an AI background where I started in the 90s writing games for the Commodore Amiga. While working there, one of the things I learned very quickly was that there was a big problem with the way in which I was developing software. The process was ending up hugely complicated and very difficult to manage.

Part of that comes from approaching problems from the top down, which is a typical human approach, where we see a problem and we come up with an approach that specifically solves it. Human beings don’t naturally fall into a position where they create the bits and pieces that can help operate to solve a problem, which is more of a bottom-up approach. I then had the privilege of becoming the producer of the Creatures series of games in the mid to late 90s.

And that changed the way I look at software. With Creatures, we modeled biological building blocks, like chemical emitters, receptors, simple biochemistry, reactions, biologically-inspired neurons. We joined all this stuff together and created a digital organism that was capable of learning how to survive in its environment, without any prior exposure to the problems it met. Now, I really love that.

I love that because actually, the computer was doing the hard work for the first time. We built a large population of simple things and those simple things were interacting with each other in a way that complex behavior could emerge from it. We didn’t have to specify any rules and that meant that the Creatures would be different, and they would have personality. But it also meant that we could breed them with a simple genetic code and get some of the advantages of this biological approach. We stole metaphors from biology and nature for the purposes of performing all this wonderful advanced computation.

https://twitter.com/simondlr/status/1037283556282834944

I was curious at the time as to whether or not that thoroughly bottom-up approach could actually be used to construct large scale virtual worlds. Because the thing I’ve always wanted to do in my life is to be able to put the matrix in my living room. I wanted to be able to go anywhere, anytime, with anyone and do anything. And I didn’t see any reason why that shouldn’t be possible. Of course, the reason why it isn’t possible is simply down to things like scale, and getting that amount of computing power to do it. But also the philosophical approach.

And in the 2000s, I spent a long time building this massive agent-based multiplayer online gaming engine called Alice. We built a real-time strategy game out of it, which was quite fun because you’re able to have thousands of players with thousands of ships, all throwing them at each other in real-time. And they could do so over a modem, not even broadband because that’s the way the Internet was back then.

We could do this because of our approach to building these agents which meant that network synchronization and other things were actually emergent properties.

Around this time, sort of the mid-2000s, I met Demis Hassabis, one of the founders of DeepMind. We got to talking about all of this, because I was able to build these worlds where you start with one agent, and then you’d have 248 and before you knew it, you’d have trees and plants and hedgerows, and it just happened out of nothing.

If you didn’t like the look of it, you could knock it down and build another one. And for me, this meant that I could build these virtual worlds where everything was real, rather than painted on scenery.

I often joke about that wonderful Simpsons episode where the fire exit was painted on the wall. But actually, most computer games are like that, most of what you see, most of that stunning, beautiful scenery, it’s not real, you can’t chip a piece of rock off and use it to make a wall or throw it at somebody. You can’t knock a tree down and make a log cabin, you can’t do any of those things. Because it’s all theory. It’s not real.

If it is actually grown from scratch, as we were doing in the mid-2000s, that’s more interesting, because people can do things without having to have rules to allow it.

(Source: Fandom)

I actually spent a few years at the beginning of the DeepMind story working with Demis and their extraordinary team looking at how biologically-inspired approaches could also work with other approaches as part of our journey towards artificial general intelligence. And during that time, I had also been talking to the other co-founder of Fetch, Humayun Sheikh.

We’d been speculating between the two of us as to whether or not it was possible to build a world that was big enough to represent all of the component parts of the economy in it.

Could we create autonomous agents that represented items of data, pieces of hardware, services, infrastructure, people, and could we use enough computing power to adapt to that world in real-time so that what any given agent saw was optimized for it?

It was a great idea, but back then we couldn’t figure out how to get the scale because we were potentially talking about billions of agents. On top of that, we also couldn’t deal with the identity issue. We didn’t have a method of value exchange or the incentive mechanisms that would make that reliable and actually behave in the way that we wanted it to. And we didn’t have enough computing power to crunch the numbers on that amount of data to create this semantic view of the world that we wanted to create.

Then, of course, along comes blockchain technology.

When blockchain came along, that felt to us to be the missing piece of the puzzle to a certain degree. Now we could get the scale that we wanted, because we could create a network of any size that we chose to, just by adding new computers. We wouldn’t have to trust each individual computer in order to include it, and suddenly, we have the computing power as well. Because once you’ve got a network of that magnitude, you can keep adding to it. And it just gets more and more powerful.

(Source: Reddit)

We also had the method of value exchange because that’s a fundamental side effect for providing integrity in these decentralized spaces. The identity issue was also resolved because we were sitting on individual unique private keys for all of the entities involved.

So, that meant that we could converge all of these technologies for the first time and meld AI multi-agent systems, digital worlds, and decentralized ledger technology to build the thing that we have been imagining. And because it’s a fully decentralized system that’s all open source and effectively owned by a community of network users, there isn’t the trust issue where no one would need to rely on a centralized entity with all that stuff.

We all came from this background with wanting to build these extraordinarily large digital environments and populate them with things to get stuff done for people.

This is the thing with life these days. It’s incredibly complicated and now we’re responsible for orchestrating our own digital life, and conducting all the individual component parts. It’s getting hard. Even when I travel from Cambridge to London, I have to use four or five different apps to get there in one piece. If I drop the ball on any of those, suddenly, I find myself standing on a train platform for a train that’ll never turn up. And it’s me that has to do that work.

It shouldn’t be the case anymore, the hard work should be done for us. We’re surrounded in all this data, information, knowledge, and computing.

And the results should be given to us without us having to go chasing them. The breaking down of the individual vertical silos of knowledge and capabilities so that they can share and work together to provide the solutions, that was one of the many attractive reasons why we wanted to do this.

BTCManager:

Before we get any deeper into this, I’d be curious to know what your definition artificial intelligence and machine learning is. A lot of people run off and write about artificial intelligence, but these two subjects, which Fetch is also working with, are pretty distinct.

(Source: Dilbert)

Simpson:

Yep, that’s true. And I’m sure that if you ask 100 people, you’ll get 100, slightly different answers.

AI is the broad subject area of anything that relates to machine intelligence in some way or form. And I guess the problem comes from the fact that it’s a huge area and you can’t say that you work in it without breaking that down a little.

Lots of people define it as machine learning, which is actually just statistical analyses of large data sets, but machine learning sounds groovier. When it’s got machine learning, it has a name attached to it. And there are lots of areas of that that are interesting. And many of those areas are interesting for Fetch.

There are lots of aspects of machine learning that we find very, very attractive, but also broad areas of artificial intelligence itself. And collective intelligence. When you can start joining large numbers, or large numbers of little bits of knowledge together to produce something grander, which, for example, is a key part of how we create this self-adapting world for the agents on Fetch.

Another example is something like embedding. With embedding, you can start throwing text into these recurrent neural networks, and end up with a reduced dimensional value. So you can plop them in space. Then you can see that the ones that are actually near to each other are probably related to each other. Now, what’s interesting about this is that you can use it to effectively to create semantic views on the world.

You can learn whether things are related to each other, without having to have any idea of what those things are, or if they have any fixed hierarchy that says, this has to do with the weather, or this has to do with transportation, you can just run them through these wonderful devices, and come out with this reduced dimensionality.

Something that pretty much everybody in the space will tell you is that none of these technologies exist in isolation. What ends up happening is you tend to find yourself combining them in different ways.

BTCManager:

In the case of Fetch, you and your team are building a technology that would effectively imbue something like a smartphone or an electric vehicle with economic agency.

Simpson:

I tend to look at things these days as populations of agents. I see the car itself, I see an agent that represents the person and I see agents that represent the sensors and other information that are in that vehicle. All of those things potentially have utility in the world.

And if you’ve got an environment that is acting, or as I’m fond of saying, this ultimate dating agency for value providers, then you’re much more likely to get better utilization out of that data as a result.

BTCManager:

If we consider a Tesla car, for instance, you would have maybe four or five agents.

Simpson:

Yeah, if not more.

And in your mobile device, for example, you walk around with a phone that’s got all sorts of really interesting information centers, and why shouldn’t you attach agents to that knowledge, and get the utility from it yourself, rather than going somewhere else? That’s dead interesting, because, as a sillier example, if a whole bunch of people puts their phone away at the same time on the same street in London, the chances are, it’s just started raining.

(Source: Art/The New Yorker)

With that kind of information, you can derive real utility. And it may well be that you’ll have 20 or 30 agents on your phone that you combine different pieces of information for different audiences all of which are relying on the Fetch network to take every opportunity possible to deliver that.

The wonderful thing is the agents that represent your data are being stored on the Fetch network, not the information itself. It’s storing agents and connecting them together, and the user is the one who’s always in control. Because if you’re not creating these agents, they don’t exist. You can also change the frequency and the accuracy of them too.

So, you can protect your privacy as you choose to. But it actually means that your information, your identity, and all the stuff that represents you are more controlled by you than they otherwise would have been.

There’s an enormous amount of value in this world, and we can be in a situation where you’ve got a network that is constantly learning how to connect a buyer and a seller together and constantly updating users’ preferences.

BTCManager:

And how is the Fetch network being monitored? Who are the people who operate nodes and verify the transactions between economic agents?

Simpson:

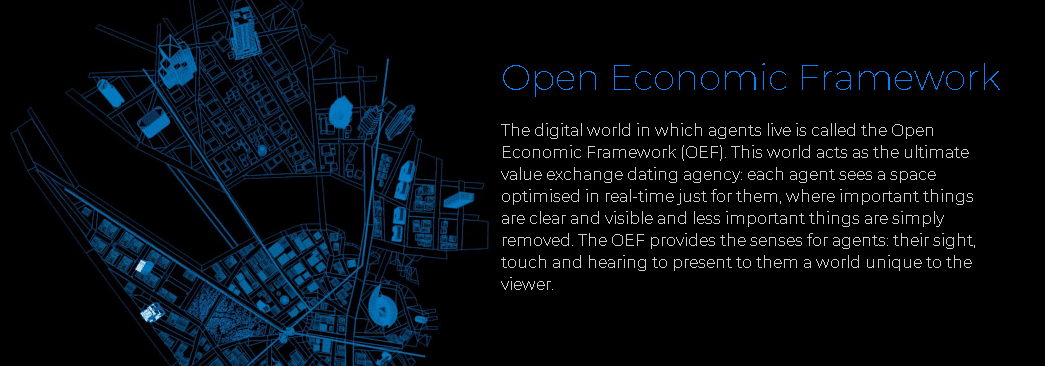

First things first, the Open Economic Framework (OEF) is a high-level layer above the underlying ledger technology that is effectively the interface to the digital world. It is providing autonomous economic agents with a method of finding each other and transacting.

(Source: Fetch.AI)

But it also gives them some other interesting capabilities relating to moving around the network. Now, obviously, this is also a peer-to-peer network as all the computers involved are connecting to each other and they’re connecting to each other on several different dimensions. One of those levels, for example, is geographical.

It is possible as an agent to position yourself in the world, but also to move around it. So if you’re looking for other agents that represent, say, stuff in London, then you can position yourself towards those items that represent London in order to be better positioned to find them. This is just one dimension for how the digital world plugs into the underlying network structures and underlying all of that we have our own unique smart ledger, which is a super high-performance blockchain.

It needs to be because there’s a lot of people out there who will boast about doing 50,000 transactions a second but won’t be able to tell you why. It’s just a number. Whereas Of course, if we’re sitting on billions of agents, all of which are doing these potentially low-value transactions with each other digitally, then quickly, we need very high throughput.

BTCManager:

It’s predicted that there will be 20 billion IoT items operating in the world. And if each item has maybe four or five sensory components, which you described earlier, that seems like a huge scalability challenge.

Simpson:

Yeah, you’re right. And that’s why we had to technologically design something that was capable of doing that, that had no theoretical scaling points. But that’s part of why decentralized ledger technology is very interesting as you can construct a workable decentralized incentive model to ensure that supply and demand appropriately relate to each other.

The big way in which nodes operate in the Fetch network generate benefits is by providing services to agents in exchange for tokens. Fetch offers to pay for the computing power that we’re using. But in a network that’s effectively a digital world, there are higher-level operations, like constructing a view of the world and moving yourself around the network.

Agents can be either passive or active. As an active agent, you can go out in the network and find what you want. Of course, you can still be passive and sit there and wait for the network to bring people to you, but the active agents can do some really interesting stuff including gathering a reward.

If the number of agents goes up, and there’s only a finite number of agents for any given node, there’s suddenly a demand for additional nodes. It then becomes profitable to set up a node at that point because there is a demand from the agent population in order to support them. And that in itself increases the overall computing power of the network, which means that you can scale up some of the more interesting decentralized computing problems.

DAGs don't solve any fundamental scalability problems. They solve latency problems at best, and in general I think DAG tech is overhyped.

— vitalik.eth (@VitalikButerin) June 10, 2018

It’s an incentive model in which people to set up additional computers that provide additional network capacity as the agent population increases. And the decentralized nature of it means that there’s no theoretical scaling point on the number of computers or nodes that can be added.

We have designed and built the scalable ledger and all of the facilities around it to be able to continue to scale to support the increased transactions as all of these devices potentially become Fetch agents.

BTCManager:

Basically, as more agents join the Fetch network, the more users will be incentivized to set up nodes and support the network.

Simpson:

Yeah, and as a bonus, every time they set up a node, they effectively increase the resolution of the underlying digital world, because you have an increased resolution on the peer-to-peer connections so that you can specialize a little bit more.

That’s when you start finding yourself with nodes that service Heathrow Airport and nodes that serve as a particular area in London. That means that the agents can position themselves far more effectively to make sense of what would otherwise become an increasingly busy world.

IoT is a really, really interesting space. You’ve got all of these completely unpatched, overly-complex computers on the Internet, that will never be secured, will never be patched. They’re just sitting there as attack vectors because a lot of them are already inside networks. If you can break in, then you’re in, you can do all this stuff, and there is no incentive in the system to force people to patch these things. There’s no way that you can prove whether the device that you’re talking to is secure and up to date or has been running the right software.

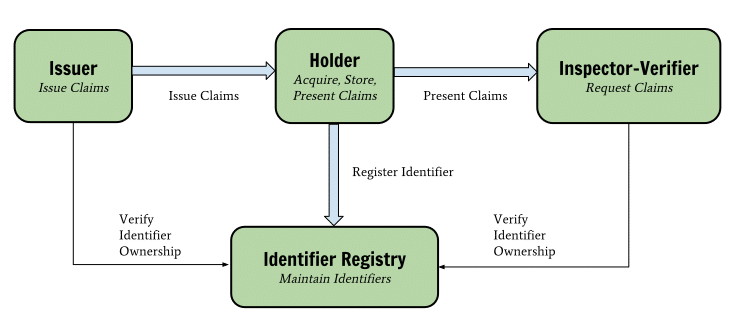

When you start looking at Fetch-type systems and public ledgers, and you start combining it with technologies like verifiable claims, for example, then suddenly, you’re in a different world, because you can choose to talk to IoT devices that can certify with a remarkable proof that they are up to date.

(Source: ldapwiki)

I am absolutely shocked on a daily basis to discover just how many of these things are basically unpatched Linux boxes that have no firewall set up with a surprising number of services that they don’t need and will be cut loose by the manufacturer when they move on to the next version.

But when you come, come up with a network that indirectly or directly incentivizes all of these things, and combines two technologies to provide reversible, verifiable proof, then you can start having trustworthy conversations between devices. That means that you’re much more likely to have a conversation with a device that can prove that it is unmodified, and that means as a value provider on a network, if you want people to talk to you, then you strongly encouraged to do these kinds of things.

I think that’s ultimately one of the feedback mechanisms that could help before it becomes a real horror.

BTCManager:

How does one become a node operator?

Simpson:

Download the software compile and run it.

BTCManager:

Just like that?

Simpson:

Yeah, it’s open-source, it’s an open network. Once you’re on the network, the mechanism provides a method for reaching consensus on how you add things to the end of agents. Because there’s trust that is built on many, many levels. It’s in your network behavior, the way in which you interact with other agents, and the network will affect other nodes trust values of you.

And Fetch has got a whole pile of wonderful mechanisms, some that we’ve built, some which we’re building this year, which can expose that trust information.

Agents don’t have to connect to just one node, this isn’t the real world where you can’t be in two places as a person, because it’s physically impossible. But as a digital agent, you can connect to five places at once, if you want. And you could do so on different dimensions. It’s very hard to be “islanded” by malicious nodes, or three or four nodes that try and cut off a chunk of agents from the rest of the network.

I’ve been in this position where there are these multiple dimensional connections between nodes that is actually very, very hard to attack in that way. Download the software and rock and roll. And that’s pretty much it. Same with being an agent.

Bear in mind that a node is sort of an agent in the sense that it has a wallet, an address, and it can receive tokens. Anybody who wishes can grab the software, build an agent, attach it to something, create a key pair, give that agent some tokens to spend to be able to get the service that it wants, and off it goes onto the network doing its stuff.

When you start combining that with verifiable reversible claims against your identity, then you’ve got that trust mechanism. You can prove that an agent is what it says it is, and that it provides what it says it does or is where it’s supposed to be, which means you’ve got that self-service trust. You could travel the whole blockchain and find out who’s got what, independently of any third party.

BTCManager:

Is there a possibility that these agents would be able to connect to another blockchain network? Fetch. AI just joined the Trusted Internet of Things Alliance and one of the executive directors is Zaki Manian who helps develop Cosmos, which is a huge Interoperability solution.

Simpson:

This is one of these rather wonderful spaces where it’s a bit like the Internet in the mid-90s, where we’re all poking around with something that we know is going to change the way we live our lives. But we can’t really put our fingers on all the details just yet. So there’s a lot of investigation. There’s a lot of innovation going on here. And there’s a lot of people trying to solve different problems in different ways.

One of the ways in which Fetch connects with other systems is through gateway agents. This is something that we recognized very early on. In the original introduction paper that we wrote at the beginning of last year explained how gateway agents could provide services to connect all of these different things together to be able to expose the features of other platforms. Other platforms can benefit from being able to find other things to potentially transact or work with on the network.

There are a lot of areas where there’s some very positive overlap between all these things, particularly related to the identity space. If you start attaching these things to autonomous economic agents, then both protocols can benefit in some way.

BTCManager:

You have also raised a huge $6 million in about ten seconds on Binance’s Launchpad and I’d be curious to know how that $6 million will be put towards further development or other ideas.

https://twitter.com/cz_binance/status/1100034248797343745

Simpson:

We’ve got a team of over 40 world-class people here building this technology, including economists and everybody else that we need to make this work. Once you start looking at a team like that, and all of the offices and the labor costs and everything else, you can see how potentially quickly money could be spent.

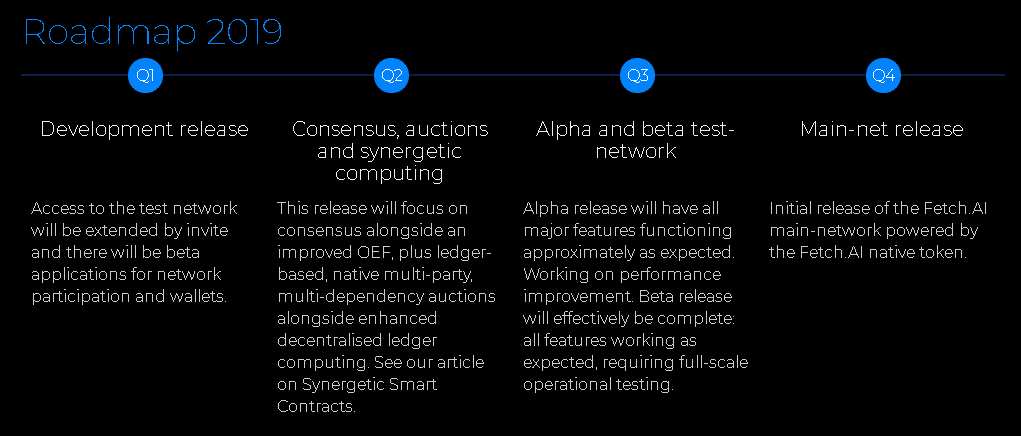

This is about building out the roadmap and making sure that we deliver on and exceed everybody’s expectations for heading towards a mainnet at the end of the year. We want to ensure that we can make that journey with appropriate room to spare as people would expect, nothing less. We have an aggressive schedule, we’re moving at the moment.

(Source: Fetch.AI)

Fetch exists as an ERC20 token, which means it exists on the Ethereum network. You can use those Ethereum tokens to connect to the test network, and to build an experiment and play and deploy agents and all of the other utility operations on the Fetch testnet. And by the end of this year, we will have our own main network native tokens, and there will be a conversion process between the ERC20 and the Fetch native token.

The money that we have raised is about taking that journey and beyond into next year.

BTCManager:

Are there ethical concerns for viewing an army of machines whose sole purpose will be to fulfill free market capitalism? I think a lot of people might be a bit concerned that will have basically robots whose sole intention is to maximize value in whatever way that looks. Are you guys concerned about what that reality could be?

Simpson:

What do you think the big concerns about reality actually are?

In the end, where we’re building is a large collection of digital representatives that work together to solve problems for you. This is about managing complexity better. It’s about giving IoT devices the ability to be able to position themselves correctly so that we get better utilization out of everything. It comes down to improving efficiency. It’s the equivalent of rolling a red carpet out in front of us.

It’s about lots of small pieces of information and knowledge that are collectively useful and available to everybody on the network. That’s how I see the Fetch world that we’re building. I see it as a greatly positive thing for the way that we live our lives.

I know that there are worries, and it’s right to consider these things and consider the possible outcomes. Human beings are nothing if not a little bit insecure. We tend to look at any other kind of intelligence or automation as something that’s coming to get us. I would call that the “Terminator Effect.”

But that’s not necessarily the case. There is a big separation here between people’s worry about artificial general intelligence and what that is compared to a large network of relatively simple digital entities in a world that optimizes their ability to get things done. That’s how we envision an economic Internet for the machine economy.